How we manipulated Locust to test system performance under pressure

The dramatic increase in Learnosity users during the back-to-school period each year challenges our engineering teams to find new approaches to ensuring rock-solid reliability at all times.

Stability is a core part of Learnosity’s offering. Prior to back-to-school (known as “BTS” to us) we load-test our system to handle a 5x to 10x increase over current usage. That might sound excessive, but it accounts for the surge of first-time users that new customers bring to the fold as well as the additional users that existing customers bring.

Since the BTS traffic spike occurs from mid-August to mid-October, we start preparing in March. We test our infrastructure and apps to find and remove any bottlenecks.

Last year, one of our larger clients ramped up their testing, tripling the usage of our Events API. In the process, several of our monitoring thresholds were breached and message delivery latency rose to an unacceptable level.

As a result, we poured resources into testing and ensuring our system was stable even under exceptional stress. To detail the process, I’ve broken the post into two parts:

- Creating the load with Locust (this piece)

- Running the load test (in part two, coming soon).

TL;DR

Here’s a snapshot of what I cover in this post:

- Our target metrics.

- How we wrote a Locust script to generate load for a Publish/Subscribe system.

- Our observations that:

  - The load test must reflect real user behaviors and interactions.

  - Load testing alone doesn’t validate system behavior against target metrics. It’s better to measure this separately while the system is under load.

A bit about our system and success metrics

For context, our Events API relies on an internal message-passing system that receives Events from publishers and distributes them to subscribers. We call it the Event bus.

This API is at the core of our Live Progress Report, which enables proctors to follow and control test sessions for multiple learners at once. Clients (both proctors and learners) publish and subscribe to topics identified by IDs matched to the learners’ sessions.

There are two streams, one for messages in each direction:

- Logging, from many learners to one proctor

- Control, from one proctor to each learner

The Event bus terminates a subscriber’s connection whenever messages matching the subscription are delivered or after 25 seconds have passed. As soon as one connection is terminated, the client establishes a new one. When the backend returns errors, clients wait before reconnecting so as not to cause cascading failures.
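To make these long-poll semantics concrete, here is a toy, single-process sketch with one subscriber per topic. The class and method names (InMemoryEventBus, publish, poll) are hypothetical; the real Event bus is a separate networked service whose API isn’t shown in this post.

```python
import threading
import time
from collections import defaultdict


class InMemoryEventBus:
    """Toy stand-in for the Event bus's long-poll behaviour (one subscriber per topic)."""

    def __init__(self):
        self._queues = defaultdict(list)  # topic -> undelivered messages
        self._cond = threading.Condition()

    def publish(self, topic, message):
        with self._cond:
            self._queues[topic].append(message)
            self._cond.notify_all()  # wake any subscriber polling this topic

    def poll(self, topic, timeout=25.0):
        """Block until messages arrive on `topic` or `timeout` seconds elapse."""
        deadline = time.monotonic() + timeout
        with self._cond:
            while not self._queues[topic]:
                remaining = deadline - time.monotonic()
                if remaining <= 0:
                    return []  # timed out: the client reconnects straight away
                self._cond.wait(remaining)
            # Deliver everything pending and end this "connection".
            messages, self._queues[topic] = self._queues[topic], []
            return messages
```

A client loop simply calls poll() again as soon as it returns, whether it got messages or an empty list after the timeout.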

Because proctors need to follow learners closely as they take a test, the event delivery has to be snappy. This informs our criteria for load test success or failure:

- We want to deliver 95% of messages in 2 seconds or less

- Messages should only be delivered once per subscriber

- Any messages that take over 15 seconds to deliver are considered lost
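As a rough illustration of how these three targets can be checked for one subscriber, the sketch below assumes a hypothetical record format: the IDs of the messages published to the subscriber’s topic, plus (message_id, latency) pairs in arrival order. It uses the nearest-rank 95th percentile and counts undelivered messages as infinitely slow. It treats any duplicate as a failure and reports lost messages alongside; that is our reading of the criteria, not the team’s actual tooling.

```python
import math

DELIVERY_TARGET_S = 2.0  # 95% of messages delivered in 2 s or less
LOST_AFTER_S = 15.0      # anything slower than this counts as lost


def evaluate(sent_ids, deliveries):
    """Check one subscriber's deliveries against the load-test targets.

    sent_ids:   IDs of the messages published to this subscriber's topic.
    deliveries: (message_id, latency_seconds) pairs in arrival order.
    """
    first_latency = {}
    duplicates = set()
    for message_id, latency in deliveries:
        if message_id in first_latency:
            duplicates.add(message_id)  # delivered more than once
        else:
            first_latency[message_id] = latency

    # Latency per sent message; never-delivered messages count as infinitely slow.
    latencies = sorted(first_latency.get(m, math.inf) for m in sent_ids)
    lost = sorted(m for m in sent_ids if first_latency.get(m, math.inf) > LOST_AFTER_S)

    # Nearest-rank 95th percentile: the ceil(0.95 * n)-th smallest latency.
    rank = (95 * len(latencies) + 99) // 100
    p95 = latencies[rank - 1] if latencies else 0.0

    return {
        "p95_s": p95,
        "duplicates": sorted(duplicates),
        "lost": lost,
        "passed": p95 <= DELIVERY_TARGET_S and not duplicates,
    }
```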

Keeping these targets in mind, here’s how we load tested our Event bus.

Loading the system

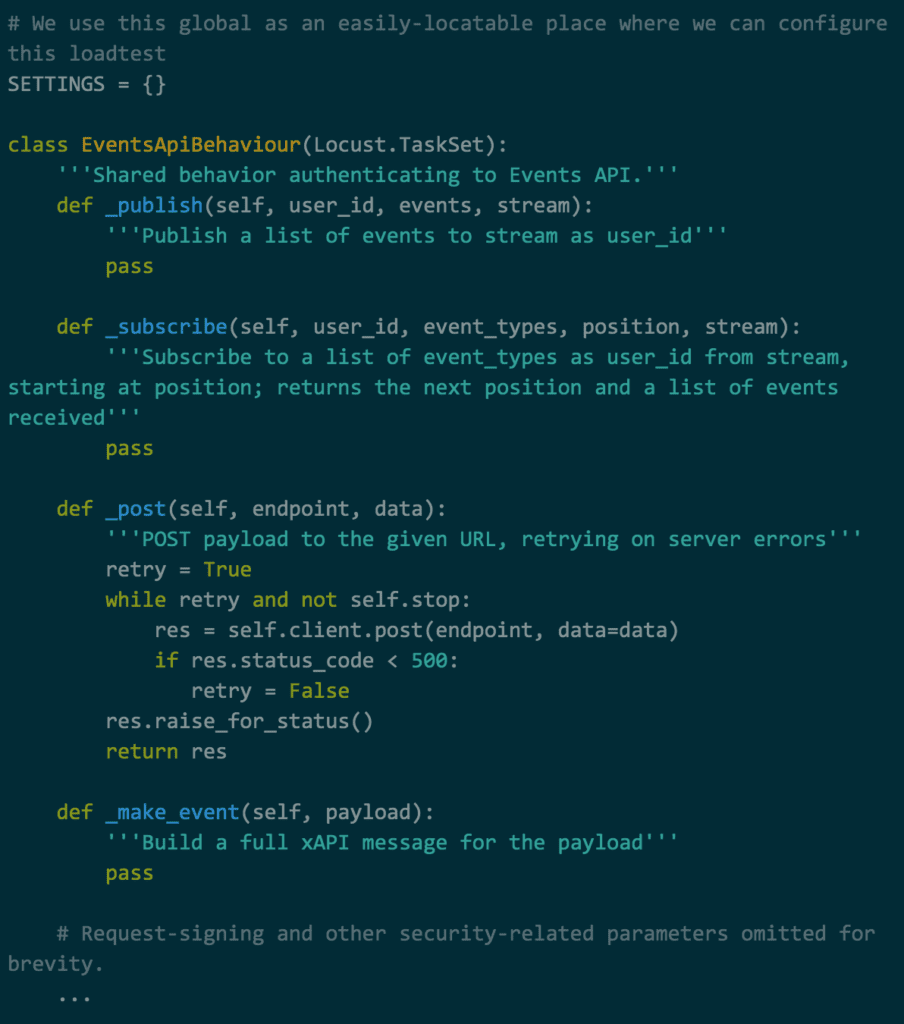

Note: The code below has been edited for brevity and is meant to illustrate the ideas of this post, not to run out of the box.

Applying the load with Locust

Locust is an open-source load testing tool that gauges how many concurrent users a system can handle. Testers describe user behaviors in Python, and Locust then simulates “swarms” of users running them.

Each locust in the swarm has one or more behaviors (TaskSets) attached to it and picks one at a time at random, according to the weight associated with each. For example, a login page might be given less weight than a popular forum thread, since it receives fewer visits.

Though Locust works well for simple user-to-website load, out of the box it doesn’t reflect the more sophisticated interactions between our Events API users and the Event bus. Both user types, proctors and learners, use the Events API, but at different publish and subscribe rates:

- Learners publish to a topic with their own session ID at short intervals and subscribe to the same topic for instructions from the proctor.

- Proctors subscribe to one topic per monitored learner (identified by that learner’s session ID) and occasionally publish one or more control messages to some of those learners.

This highlights one of the big differences between Learnosity’s use case and a simple website test: we need publishers and subscribers interacting with the same topic at roughly the same time.

We also need our tasks to establish two connections in parallel: the long-running subscribe polls, and the short-lived publishes. Finally, we need to work out realistic values for the various time intervals.

Getting locusts to behave

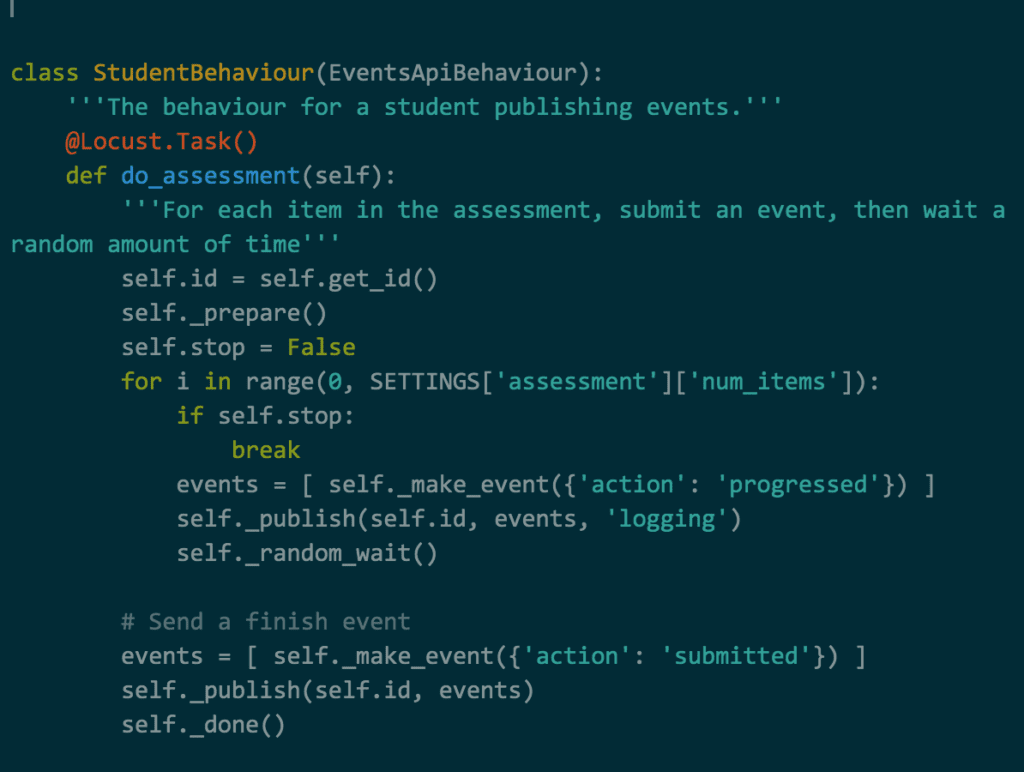

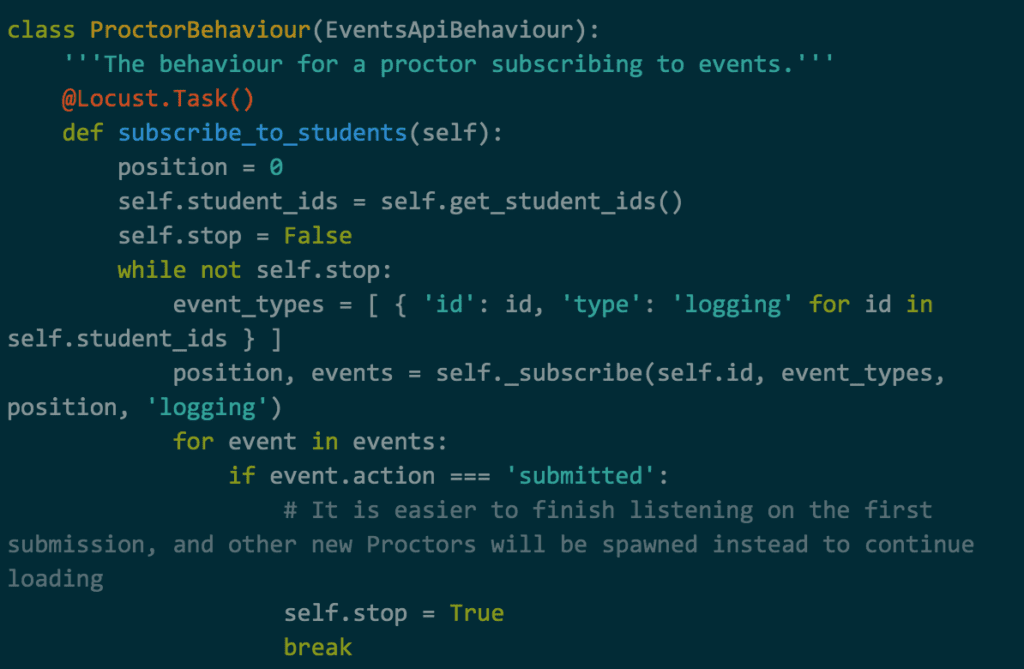

Given the state we needed to maintain during both learner and proctor sessions, we opted for single-task behaviors: a learner works through a set number of Items before finishing their assessment, while a proctor subscribes to a set number of learners and waits until they have all finished before terminating.
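A stripped-down sketch of those two single-task behaviors, with the Locust and HTTP plumbing left out. The function names and the “finished” event a learner publishes at the end of its assessment are assumptions for illustration; poll() stands in for one long-poll over all of a proctor’s subscribed topics.

```python
def run_learner(publish, learner_id, items):
    """Work through a fixed number of Items, then announce completion."""
    for item in range(1, items + 1):
        publish(learner_id, f"item-{item}")
    publish(learner_id, "finished")  # hypothetical end-of-assessment event


def run_proctor(poll, learner_ids, max_polls=10_000):
    """Follow a fixed set of learners until every one of them has finished.

    poll() returns a possibly empty list of (learner_id, event) pairs, like one
    long-poll response covering all subscribed topics. max_polls is a safety
    net (our addition) so a proctor whose learners never appear can't loop forever.
    """
    pending = set(learner_ids)
    seen = []
    for _ in range(max_polls):
        if not pending:
            break
        for learner_id, event in poll():
            seen.append((learner_id, event))
            if event == "finished":
                pending.discard(learner_id)
    return pending, seen
```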

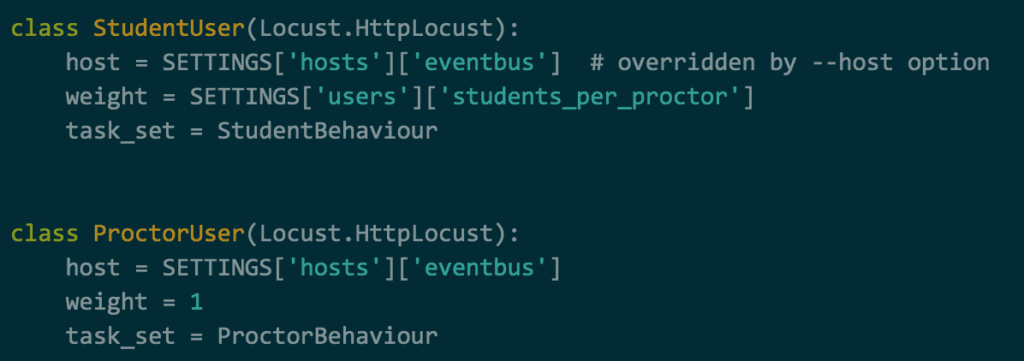

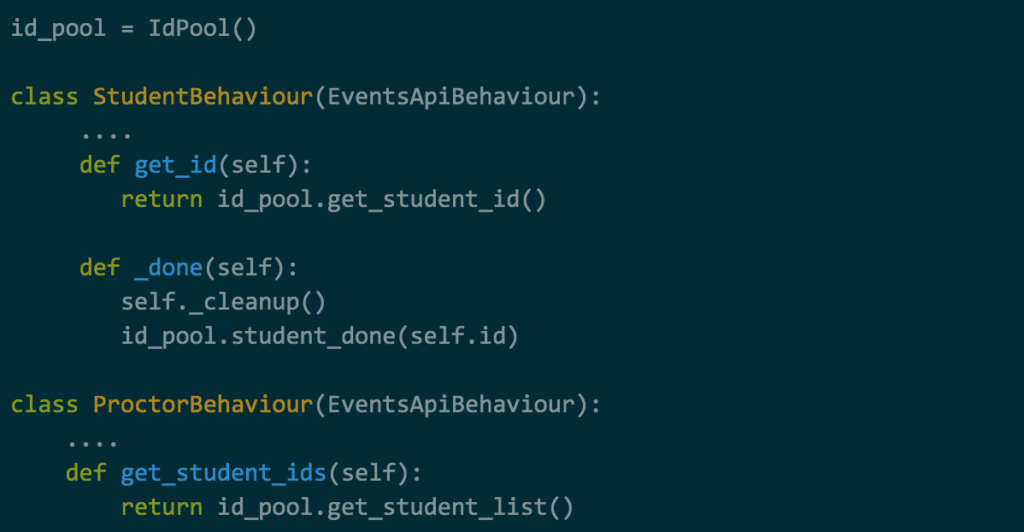

Both our StudentBehaviour and ProctorBehaviour objects inherit from an EventsApiBehaviour class derived from Locust’s TaskSet. They’re both defined and used in the same test script.

This lets us weight the two behaviors so that more locusts are created with the StudentBehaviour TaskSet than with ProctorBehaviour.
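In the Locust 0.x releases that HttpLocust belongs to, this is done with a weight attribute on each Locust class: the runner splits the requested number of users between classes roughly in proportion to those weights and spawns them in random order. The standard-library sketch below mimics that split; the 25:1 ratio and the spawn_order name are made up for illustration.

```python
import random

# Hypothetical mix: 25 learners for every proctor.
BEHAVIOUR_WEIGHTS = {"StudentBehaviour": 25, "ProctorBehaviour": 1}


def spawn_order(total, weights=BEHAVIOUR_WEIGHTS, rng=random):
    """Split `total` users across behaviours in proportion to their weights,
    then shuffle so both kinds are spawned interleaved.

    Per-behaviour counts are rounded, so for awkward ratios the result can be
    off by one from `total`.
    """
    weight_sum = sum(weights.values())
    bucket = []
    for name, weight in weights.items():
        bucket.extend([name] * round(total * weight / weight_sum))
    rng.shuffle(bucket)
    return bucket
```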

Synchronizing proctors and students

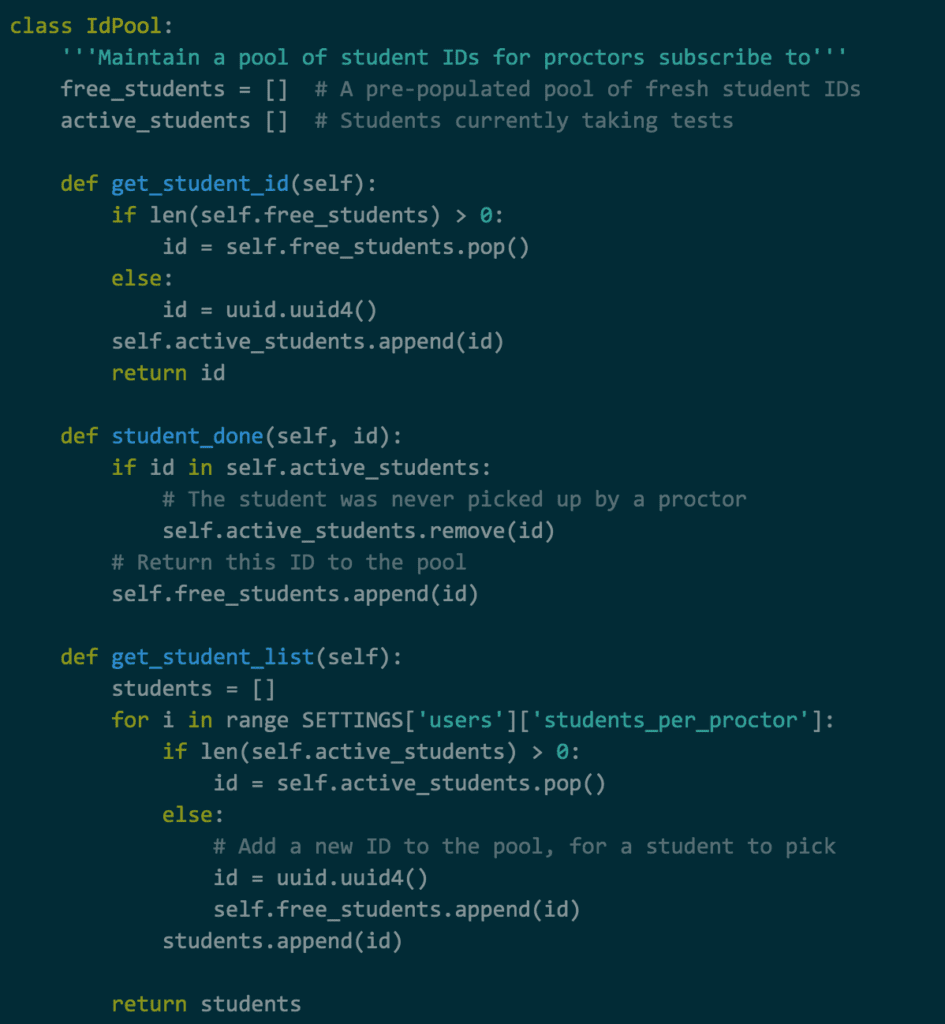

We want proctors to subscribe to active students. Otherwise, they will loop forever waiting for events that will never come. We can achieve this with a shared pool of user IDs. Each newly spawned student requests an ID from the pool, and each new proctor requests a list of IDs belonging to students who haven’t yet been assigned to a proctor.

The pool consists of a list of free student IDs, from which new students pick theirs, and a list of active student IDs, from which each new proctor takes and removes a slice. If either list is empty, a new ID is generated and placed in the appropriate list for a user of the other type to pick up. When a student finishes, its ID is returned to the pool.
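A minimal sketch of such a pool, under our reading of the description above. The class and method names are hypothetical, and one detail is our own assumption: the pool remembers which IDs a proctor is already waiting on, so an ID a proctor pre-generated isn’t offered to a second proctor when a student picks it up, while a recycled ID is. A lock keeps the lists consistent if users are spawned concurrently.

```python
import itertools
import threading


class IdPool:
    """Hypothetical shared pool that lets students and proctors agree on IDs."""

    def __init__(self, prefix="student"):
        self._new_ids = (f"{prefix}-{n}" for n in itertools.count(1))
        self._free = []        # IDs waiting for a student to pick them up
        self._active = []      # running students that no proctor watches yet
        self._claimed = set()  # IDs some proctor is currently waiting on
        self._lock = threading.Lock()

    def acquire_student_id(self):
        with self._lock:
            student_id = self._free.pop(0) if self._free else next(self._new_ids)
            if student_id not in self._claimed:
                # Nobody is watching this student yet: offer it to proctors.
                self._active.append(student_id)
            return student_id

    def acquire_proctor_ids(self, count):
        with self._lock:
            ids = self._active[:count]
            del self._active[:count]
            # Too few running students: pre-generate IDs for students to come.
            while len(ids) < count:
                new_id = next(self._new_ids)
                self._free.append(new_id)
                ids.append(new_id)
            self._claimed.update(ids)
            return ids

    def release_student_id(self, student_id):
        with self._lock:
            self._claimed.discard(student_id)
            if student_id in self._active:
                self._active.remove(student_id)
            self._free.append(student_id)
```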

With this pool in place, both proctors and students can agree on a set of IDs to use.

This gives us a functional load test.

We can target different environments with the --host argument, which overrides the host value in HttpLocust classes. Within a TaskSet, this value is available as self.locust.host. This is useful for us, as it lets us derive the base URLs for the other APIs we need for various authentication purposes.
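How those base URLs are derived depends on Learnosity’s own hostname scheme, which the post doesn’t describe. Purely as an illustration, assuming hypothetical hostnames of the form <service>.<environment domain>, a helper could swap the first label of the --host value (for example, the value read from self.locust.host):

```python
from urllib.parse import urlsplit, urlunsplit


def sibling_api_url(host, service):
    """Return the base URL of another API in the same environment.

    Assumes (hypothetically) that every API lives at <service>.<domain>, so
    only the first DNS label of `host` needs replacing. Any port is kept.
    """
    parts = urlsplit(host)
    _, _, domain = parts.netloc.partition(".")
    return urlunsplit((parts.scheme, f"{service}.{domain}", "", "", ""))
```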

Though the script above creates a substantial load, it doesn’t reflect what we see from real customers.

- The load test spirals out of control as soon as the system is overloaded: publications and subscriptions fail and retry immediately, which puts an unrealistic load on the system.

- Learners don’t subscribe to control commands from proctors, as real learners do.

- Stopping the load test doesn’t stop the locusts from loading the system.

Modifying the load to match real-life use

To match the characteristics of real client traffic, we made a few refinements.

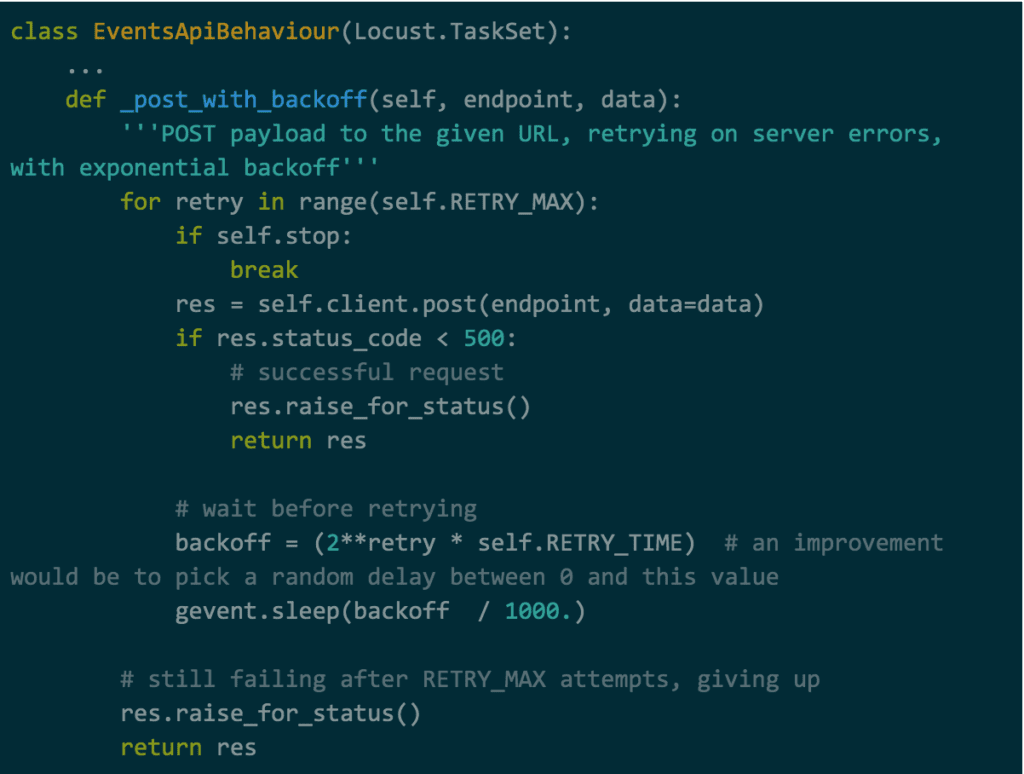

Refinement 1: Retry with backoff on error

When applying too much load, the naive retry mechanism in EventsApiBehaviour._post creates a thundering herd: connections are recreated immediately on failure and the server cannot recover from a transient overload.

This is a good example of the degree of realism that a load test needs to have. In this case, it’s just a matter of implementing the same backoff strategy the Events API already has.

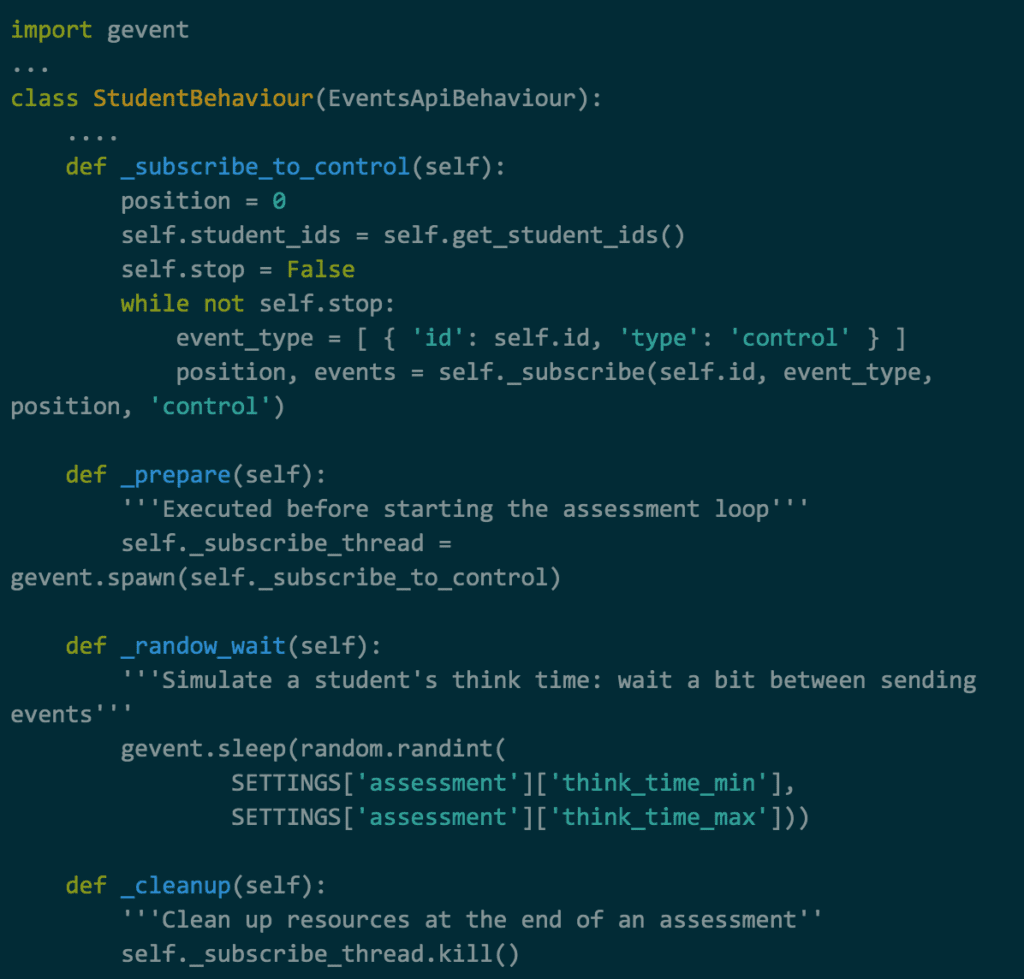
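The post doesn’t spell out the Events API’s backoff strategy, so the sketch below assumes a common one: capped exponential backoff with “full jitter”, where after the n-th consecutive failure the client waits a random time between zero and min(max_delay, base_delay * 2**n). The function name, exception class, and default values are hypothetical; sleep and rng are injectable so the behavior can be tested without waiting.

```python
import random
import time


class RetriesExhausted(Exception):
    pass


def post_with_backoff(send, max_attempts=6, base_delay=0.5, max_delay=30.0,
                      retry_on=(OSError,), sleep=time.sleep, rng=random):
    """Call `send()` until it succeeds, waiting longer after each failure.

    Random jitter spreads retries out, so clients that failed together don't
    all come back at the same instant (the thundering herd).
    ConnectionError and TimeoutError are OSError subclasses.
    """
    for attempt in range(max_attempts):
        try:
            return send()
        except retry_on as exc:
            if attempt == max_attempts - 1:
                raise RetriesExhausted(f"gave up after {max_attempts} attempts") from exc
            ceiling = min(max_delay, base_delay * 2 ** attempt)
            sleep(rng.uniform(0, ceiling))
```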

Refinement 2: Subscribe students to the control stream

The loop for learners is pretty simple: send an event, then wait.

To reflect real-life use cases though, learners should also wait for commands from the proctor. This is important because it creates many more connections to the system. Even though inactive, these connections still use server resources.

Fortunately, Locust plays well with gevent, which lets us run the subscription in parallel with little fuss while the main loop continues to publish new events.

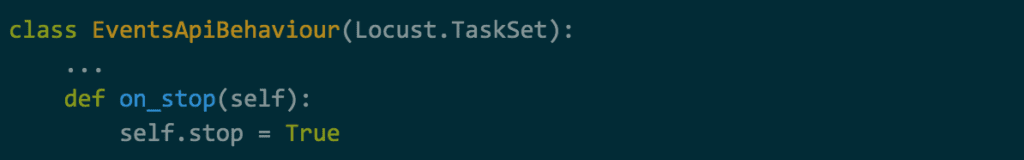
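Locust itself runs on gevent, where the subscription would be spawned as a greenlet. To keep the sketch runnable with only the standard library, it swaps greenlets for a plain threading.Thread and uses a queue.Queue as a stand-in for the learner’s control topic, with get(timeout=...) playing the role of one long-poll request. All names here are hypothetical.

```python
import queue
import threading
import time


def run_learner_with_control(publish, control_queue, items=5, interval=0.01,
                             poll_timeout=0.05):
    """Publish one event per Item while a background thread waits for control
    messages. Returns the control messages received."""
    stop = threading.Event()
    received = []

    def subscribe_control():
        while True:
            try:
                # One "long poll": returns as soon as a message arrives, or
                # raises queue.Empty after poll_timeout (25 s on the real bus).
                received.append(control_queue.get(timeout=poll_timeout))
            except queue.Empty:
                if stop.is_set():
                    break  # the learner is done: stop re-polling
                # otherwise nothing arrived: reconnect immediately

    subscriber = threading.Thread(target=subscribe_control, daemon=True)
    subscriber.start()
    try:
        for item in range(items):
            publish({"item": item})
            time.sleep(interval)
    finally:
        stop.set()
        subscriber.join()
    return received
```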

Refinement 3: Terminate cleanly for clients

One remaining issue was that terminating the load test from the Locust interface would leave simulated users running through the rest of their TaskSet loop.

To avoid this, we needed to tell each TaskSet to exit its loop immediately, skipping the rest of the assessment and canceling any retries. Fortunately, the loops discussed above are conditioned on self.stop not being True. Locust provides the missing piece in the form of the on_stop method, which it calls on all running TaskSets when terminating.
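The pattern can be sketched without Locust: a hypothetical LearnerSession whose loop checks self.stop before every step, and an on_stop() that sets the flag and also wakes any pending wait, so a learner sitting out an interval or retry delay exits at once instead of finishing its sleep. In a real script, on_stop would be the TaskSet hook Locust calls at shutdown.

```python
import threading


class LearnerSession:
    """Hypothetical learner loop that stops promptly when asked to."""

    def __init__(self, publish, items=10):
        self.publish = publish
        self.items = items
        self.stop = False
        self._wake = threading.Event()

    def on_stop(self):
        # Called at shutdown (Locust calls on_stop on running TaskSets).
        self.stop = True
        self._wake.set()  # cut short any interval or backoff wait in progress

    def wait(self, seconds):
        # Sleep that returns early once on_stop() has been called.
        self._wake.wait(seconds)

    def run(self, interval=0.5):
        """Publish one event per Item; return how many were sent."""
        sent = 0
        for item in range(self.items):
            if self.stop:
                break  # skip the rest of the assessment
            self.publish(item)
            sent += 1
            self.wait(interval)
        return sent
```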

Confirming system behavior

What’s described above gives us a realistic load test that we can start and stop at will – but that’s just part of the job. The next step is verifying that the system performs as we want it to under this load.

While Locust can provide some statistics about successful and failed connections, it doesn’t capture the data that’s most relevant to our targets such as lost and duplicate messages or long delivery delays between publishers and subscribers.

To fix this we wrote a separate application that sends and receives messages to collect those metrics during load-testing.
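One way such a measurement client can compute delivery latency is to embed an ID and a send timestamp in every message it publishes, then compare against the clock when the message arrives. Assuming the same process both sends and receives, there is no clock skew to correct for. The function and JSON field names below are hypothetical; lost and duplicate messages can then be tracked per probe_id.

```python
import json
import time
import uuid


def make_probe(sender, clock=time.time):
    """Build a probe message carrying its own ID and send timestamp."""
    return json.dumps({"probe_id": str(uuid.uuid4()), "sender": sender, "sent_at": clock()})


def read_probe(raw, clock=time.time):
    """Decode a received probe and return (probe_id, latency_seconds)."""
    body = json.loads(raw)
    return body["probe_id"], clock() - body["sent_at"]
```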

An important additional task at this point is to keep an eye on the health of the underlying system. To make sure everything was operating smoothly we ran checks to see if the nodes running the Event bus were under too much stress (from CPU load, memory pressure, connection numbers, etc.). We also tracked logs from the system, web server, and Event bus to identify the root causes of any issues we spotted.

Flexibility is often a state of mind (and expertise)

Locust is a powerful tool for single-user website load tests that also lends itself well to more complex load-testing scenarios – provided you bring in-depth knowledge of what system behaviors you’re looking to test and what kind of traffic your system receives.

To mimic the user behavior patterns we experience at Learnosity, we had to go well beyond the basic script used in more straightforward load tests.

This isn’t a challenge anyone should take lightly.

Prior to running the load test for real, we went through multiple rounds of trial and error in developing a sufficiently realistic script. The code presented above represents only the end result; it doesn’t reflect the amount of time and effort it took to build it up – either for the basic behavior or the subsequent refinements.

Our efforts in ensuring reliability at scale might not be glamorous, but they’re worth sharing. Reflecting on our process helps us refine it, while documenting the experience may help other engineering teams facing a similar challenge.

In part two of this series, I’ll look at the (nerve-shredding) next step in the process: running the tests.